Quick summary: 11 proven best practices for optimizing Snowflake costs and avoiding unpleasant surprises on your next invoice, all while maintaining performance. For a deeper dive into Snowflake cost optimization, download our complimentary white paper “Optimizing Snowflake costs: a practical guide.”

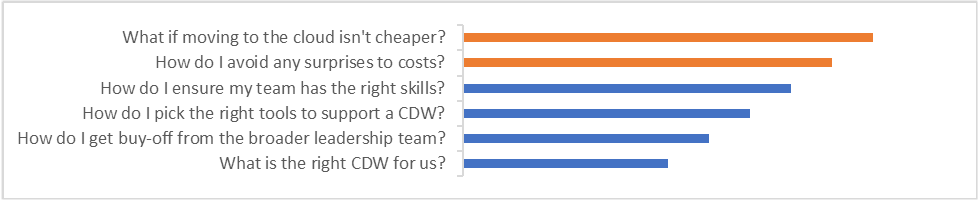

The data and analytics world is going through an exciting time of change and innovation. As products mature and begin to deliver their potential value, there is a lot of excitement around, and investment in, new tools. Most CIOs and CTOs, however, have learned from previous hype cycles and are more cautious. From our conversations with leaders in various industries, including high tech, telecom, and utilities, we learned that cost is among their most common concerns.

Understanding the costs of Snowflake is vital, as they work very differently from those of traditional databases. With the separation of compute and storage, you are no longer calculating costs based on up-front server costs, server maintenance, and license fees.

Snowflake is a fully managed SaaS data platform with no up-front costs: you are billed only for the resources and services you consume. Under this usage-based cost model, knowing in advance how costs are computed and which guardrails you can put in place helps prevent billing surprises and runaway costs. Once you understand Snowflake’s pricing and service models, you can also uncover additional opportunities to optimize costs while maintaining performance.

How Snowflake costs work

The total cost of using Snowflake is the sum of your compute, storage, and data transfer costs.

• Compute (usually the biggest cost): Virtual warehouse, serverless (such as search optimization and Snowpipe), and cloud services (such as authentication, metadata management, and access control) usage, billed according to running time, warehouse size, and Snowflake edition

• Storage: Priced per terabyte per month and varies slightly by region

• Data transfer: Moving data out of Snowflake or across regions or cloud providers
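
As a rough illustration of how these three components add up, the Python sketch below estimates a monthly bill. The credits-per-hour figures follow Snowflake’s standard warehouse sizing, where each size up doubles the rate. The function name `estimate_monthly_cost` and all three unit prices are placeholder assumptions, since actual rates depend on your edition, region, cloud provider, and contract. Serverless and cloud services usage are left out to keep the example small.

```python
# Credits consumed per hour by a standard warehouse of each size.
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16}


def estimate_monthly_cost(
    warehouse_size: str,
    hours_running: float,
    storage_tb: float,
    transfer_out_tb: float,
    price_per_credit: float = 3.00,        # assumption: depends on edition and region
    storage_price_per_tb: float = 23.00,   # assumption: depends on region and plan
    transfer_price_per_tb: float = 20.00,  # assumption: depends on cloud and destination
) -> dict:
    """Return a simple monthly cost breakdown in dollars."""
    compute = CREDITS_PER_HOUR[warehouse_size] * hours_running * price_per_credit
    storage = storage_tb * storage_price_per_tb
    transfer = transfer_out_tb * transfer_price_per_tb
    return {
        "compute": compute,
        "storage": storage,
        "transfer": transfer,
        "total": compute + storage + transfer,
    }


# Hypothetical workload: a Medium warehouse running 160 hours a month,
# 10 TB stored, and 1 TB transferred out.
estimate = estimate_monthly_cost("M", hours_running=160, storage_tb=10, transfer_out_tb=1)
print(estimate)
```

With these placeholder prices, compute accounts for most of the total, which is why the warehouse-related practices later in this article tend to have the largest effect.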

Best practice #1: Align on use cases and data SLAs with your end stakeholders to define platform requirements.

Best practice #2: Create a baseline using one of the free online cost calculators available.

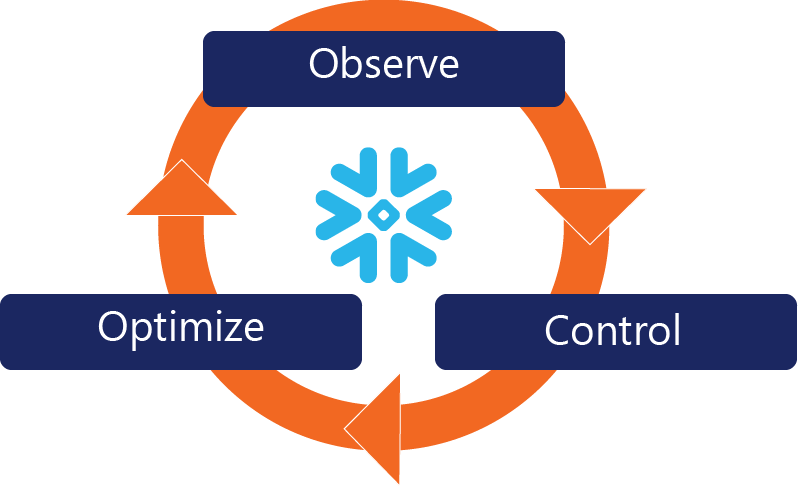

Snowflake’s Cost Management Framework

Snowflake has created a Cost Management Framework that focuses on three areas:

• Visibility: Understanding, exploring, monitoring, and attributing spend

• Control: Putting guardrails in place to limit spend

• Optimization: Identifying and reducing inefficient spend

Best practice #3: Leverage built-in features for monitoring, alerting, and suspending workloads when they are not needed.
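
One built-in guardrail is the resource monitor, which can notify you or suspend warehouses once a credit quota is reached. The Python sketch below only builds the SQL text for a monitor and does not connect to Snowflake. The helper name `resource_monitor_sql`, the monitor and warehouse names, and the quota and thresholds are all hypothetical; check the statements against the current Snowflake documentation before running them.

```python
def resource_monitor_sql(name, credit_quota, notify_at=75, suspend_at=100, warehouse=None):
    """Build SQL statements for a monthly resource monitor (illustrative only)."""
    # Simple guard so that only plain, unquoted identifiers are interpolated.
    for identifier in (name, warehouse):
        if identifier is not None and not identifier.isidentifier():
            raise ValueError(f"unsupported identifier: {identifier!r}")

    statements = [
        f"CREATE OR REPLACE RESOURCE MONITOR {name} "
        f"WITH CREDIT_QUOTA = {int(credit_quota)} "
        "FREQUENCY = MONTHLY START_TIMESTAMP = IMMEDIATELY "
        f"TRIGGERS ON {int(notify_at)} PERCENT DO NOTIFY "
        f"ON {int(suspend_at)} PERCENT DO SUSPEND;"
    ]
    if warehouse is not None:
        # Attach the monitor to one warehouse so its quota applies there.
        statements.append(f"ALTER WAREHOUSE {warehouse} SET RESOURCE_MONITOR = {name};")
    return statements


# Hypothetical names and quota.
statements = resource_monitor_sql("etl_monitor", 100, warehouse="etl_wh")
for statement in statements:
    print(statement)
```

Here `NOTIFY` sends an alert only, while `SUSPEND` lets running queries finish before the warehouse is suspended.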

Best practice #4: Embed metadata, tag warehouses by use case, and use third-party dashboards to monitor your platform.

Get your compute under control

The key to controlling your compute costs is better management of your warehouses and a strong understanding of your workloads.

Best practice #5: Size and scale your warehouses appropriately based on workload, size, and complexity of data.
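
To see why sizing depends on the workload, note that each warehouse size up doubles the credits consumed per hour, so a larger warehouse only pays for itself if the job speeds up accordingly. The Python sketch below compares the credits used by one job at three sizes. The runtimes are hypothetical, not measurements; you would substitute timings from your own workload.

```python
SIZES = ["XS", "S", "M", "L", "XL"]


def credits_for_run(size: str, runtime_minutes: float) -> float:
    """Credits used by one run, given that each size up doubles the hourly rate."""
    credits_per_hour = 2 ** SIZES.index(size)  # XS = 1, S = 2, M = 4, ...
    return credits_per_hour * runtime_minutes / 60


# Hypothetical runtimes (in minutes) for the same job at three sizes.
runtimes = {"S": 60, "M": 30, "L": 20}
costs = {size: credits_for_run(size, minutes) for size, minutes in runtimes.items()}

for size, credits in costs.items():
    print(f"{size}: {runtimes[size]} min, {credits:.2f} credits")
```

In this made-up case, moving from Small to Medium halves the runtime at the same credit cost, while Large costs more because the job no longer speeds up in proportion to the warehouse size.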

Best practice #6: Customize auto-suspend and auto-resume values to limit unused time while aligning with your workflow’s data availability needs.
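
The effect of the auto-suspend setting can be estimated with a small simulation. The Python sketch below assumes per-second billing with a 60-second minimum each time a warehouse resumes, and it simplifies real behavior by assuming the warehouse suspends exactly when the idle timeout elapses. The function name `billed_seconds` and the query schedule are hypothetical.

```python
def billed_seconds(queries, auto_suspend):
    """Estimate billed warehouse seconds for a list of (start, duration) queries.

    The warehouse resumes when a query arrives while it is suspended and
    suspends after `auto_suspend` idle seconds. Each resume is billed for
    at least 60 seconds.
    """
    total = 0
    session_start = None  # when the warehouse last resumed
    session_end = None    # when it will suspend if no further query arrives
    for start, duration in sorted(queries):
        finish = start + duration
        if session_start is None or start >= session_end:
            # The warehouse was suspended: bill the previous session, then resume.
            if session_start is not None:
                total += max(60, session_end - session_start)
            session_start = start
            session_end = finish + auto_suspend
        else:
            # The warehouse is still running: push back the suspend time.
            session_end = max(session_end, finish + auto_suspend)
    if session_start is not None:
        total += max(60, session_end - session_start)
    return total


# Hypothetical schedule: three 30-second queries, 400 seconds apart.
queries = [(0, 30), (400, 30), (800, 30)]
long_timeout = billed_seconds(queries, auto_suspend=600)
short_timeout = billed_seconds(queries, auto_suspend=60)
print(long_timeout, short_timeout)
```

For this schedule, the shorter timeout bills far fewer seconds. The trade-off is that every resume starts with a cold warehouse cache and adds a short delay, so very low values can hurt workloads that query the same data frequently.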

Don’t let poor storage configurations surprise you

While storage isn’t typically one of your larger costs, auditing your configuration settings can reduce waste.

Best practice #7: Use Secure Data Sharing and zero-copy cloning to avoid storing duplicate data, and align Time Travel retention with your data retention strategy.

Best practice #8: Drop transient and temporary objects when you no longer need them, and consider external tables and stages for large data sets.

Use a cloud-optimized data management strategy

What worked on your traditional RDBMS may not work now that you’re using a cloud-native solution.

Best practice #9: Choose your Snowflake edition and cloud provider carefully so that you’re not overpaying for features you don’t use.

Best practice #10: Where possible, limit your use of materialized views, which refresh automatically when the underlying data changes and can result in unexpected compute costs.

Best practice #11: Review your cache and load strategy to ensure you’re not overpaying for coverage you don’t need.

Ready to get started?

These techniques and considerations only scratch the surface of cost optimization for your Snowflake cloud data warehouse. Above all, give your team the time to plan, analyze, monitor, and optimize your platform so that you can achieve your cost reduction goals.

To learn more about optimizing your Snowflake costs, download our white paper or contact us and we’ll be happy to help you get started.

Mick Wagner is a Senior Solutions Architect in the Advanced Analytics practice at Logic20/20.