Big data can give businesses an unparalleled advantage by enabling informed decisions at the right place and the right time. Although data is extremely valuable when used properly, many organizations don’t reach this potential due to one thing: data storage.

Databases have traditionally been stored locally—on premises—in an organization’s servers. Although firms still use on-prem, it has steadily become obsolete for reasons such as:

- Large upfront capital expenditure

- Constant system maintenance responsibility (hardware and software, backups, and recovery)

- Storage and computing limitations

- Significant IT expertise required

- Complex implementation

- Long wait-time to complete installations and updates for each server

- High costs to acquire new servers

The technology industry has evolved to meet this challenge with innovative solutions and platforms built in the cloud, making data storage issues a thing of the past. Cloud-based data platforms separate storage from compute allowing them to take full advantage of the decreasing price of data storage and the ease of scaling compute power.

Migrating existing data to a new warehouse can sometimes be a complicated process, but that’s where we can help.

Client journey at a glance

When a leader in the business intelligence industry wanted better performance and pricing from their data storage, they contacted us to migrate their on-prem SQL data warehouse to Snowflake.

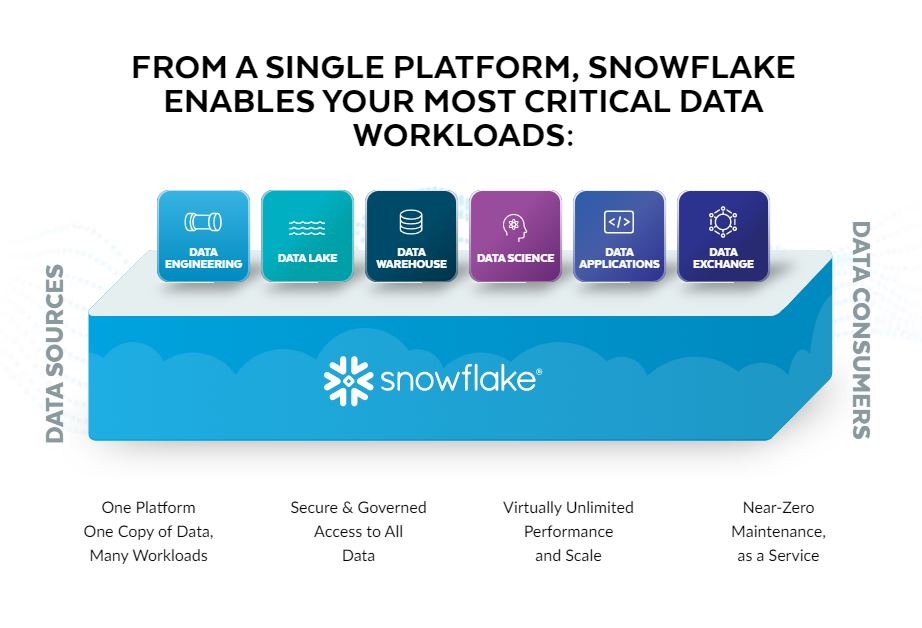

Snowflake is a data storage platform that easily allows users/businesses to scale massive amounts of data and spread computing power across countless machines.

Customer challenge

Our client was using an on-prem SQL Server, which created multiple challenges, including:

- High-operating costs

- Inefficient collaboration between product divisions due to siloed data

- Inability to process data and analytics due to restrictive infrastructure

They wanted to migrate their existing reports and data warehouse to Snowflake.

Approach and solution

After meeting with our client to discuss their most pressing issues, we established the following goals:

- Convert existing SQL objects to Snowflake

- Enhance and update data models

- Decrease costs associated with their rapid data management growth

- Improve processing performance

We worked closely with our client to understand their data, specifically what it was used for and how it moved within their organization. In order to streamline the migration process, we created a template to identify which data should be transferred and how that data was being used in the existing system.

The first step required analyzing current on-prem data to identify unsupported data types and perform a gap analysis. After determining how each data type would be managed in Snowflake, we then created a standardized procedure to merge code for all existing data. From there, we created documentation for easy auditing, automated the migration via scripts based on the standard code template, and tested our results. Our standardized template optimized the data conversion process, making it smoother and faster.

Values and benefits – “the wins”

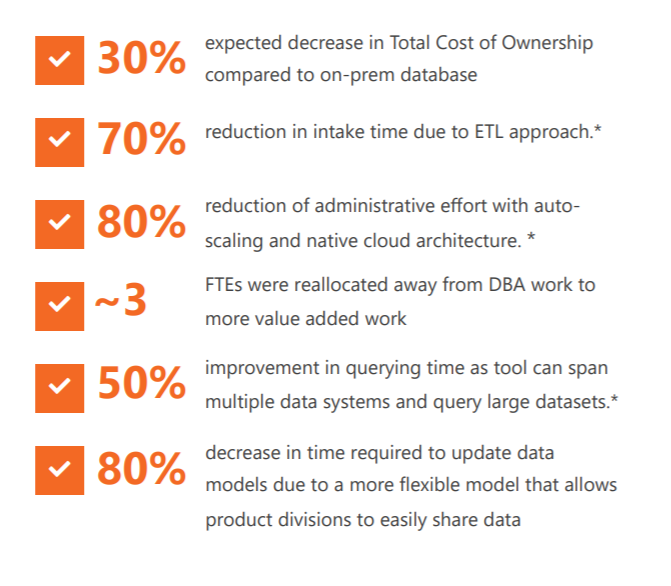

As a result of the successful Snowflake migration and optimization, our client has seen notable benefits:

In addition, our client has seen the following operational benefits:

- They can analyze larger datasets more quickly.

- Their sales and marketing teams are better equipped to predict customer growth and churn, as well as able to easily identify new cross-selling opportunities.

- Their IT team has seen a reduction in operating costs.

Our client expects an improvement in data quality due to the design patterns we implemented, and the platform is being leveraged for more forward-facing data science analysis.

Like what you see?

Mick Wagner is a Senior Manager in the Advanced Analytics practice at Logic20/20.